Microsoft Set to Unveil Its Latest AI Chip, Codenamed ‘Athena,’ Next Month

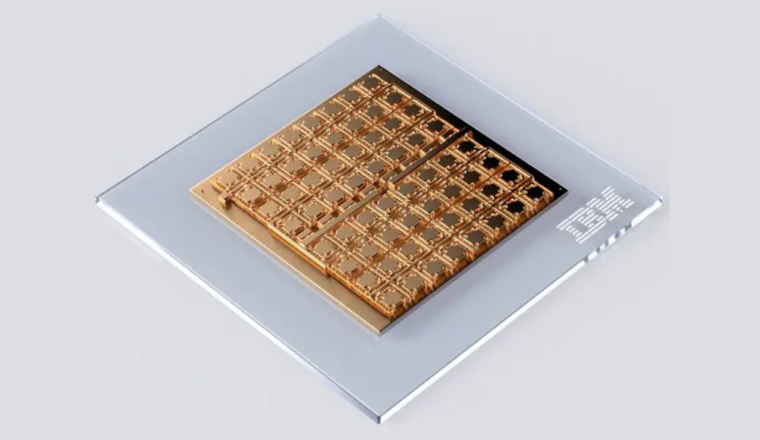

After years of development, Microsoft is on the cusp of revealing its highly anticipated AI chip, codenamed ‘Athena,’ at its annual ‘Ignite’ event next month. The unveiling marks a significant milestone for the tech giant, as it signals a potential shift away from its reliance on GPUs made by NVIDIA, the dominant supplier of chips for AI workloads.

Microsoft designed Athena for its data center servers, tailoring it specifically for training and running large language models. The motivation is the ever-increasing demand for NVIDIA chips to power AI systems: those chips are notoriously scarce and expensive, with NVIDIA’s most powerful AI offering, the H100, carrying a price tag of $40,000.

By developing its own AI chip in-house, Microsoft aims to curb costs and bolster its cloud computing service, Azure. Notably, Microsoft has been quietly working on Athena since 2019, the same year it made its $1 billion investment in OpenAI, the organization behind ChatGPT. Over the years, Microsoft has committed nearly $13 billion to OpenAI, further deepening their collaboration.

Athena’s Arrival: Microsoft’s In-House AI Chip Ready for the Spotlight

Besides advancing its own AI ambitions, Microsoft’s chip could help OpenAI meet its GPU requirements. OpenAI has recently expressed interest in developing its own AI chip or acquiring a chipmaker capable of building chips tailored to its needs.

This development holds promise for OpenAI, especially given the colossal expense of scaling ChatGPT. According to a Reuters report, expanding ChatGPT to a tenth of Google’s search scale would require approximately $48.1 billion worth of GPUs, along with about $16 billion a year in chips. Sam Altman, the CEO of OpenAI, has previously voiced concerns about GPU shortages affecting the company’s products.

To date, ChatGPT has relied on a fleet of 10,000 NVIDIA GPUs integrated into a Microsoft supercomputer. As ChatGPT transitions from a free service to a commercial one, its demand for computational power is expected to skyrocket, requiring more than 30,000 NVIDIA A100 GPUs.

Microsoft’s Athena: A Potential Game-Changer in the Semiconductor Race

The global chip supply shortage has pushed the already high prices of NVIDIA chips even higher. In response, NVIDIA has announced the upcoming launch of the GH200 chip, which features the same GPU as the H100 but with triple the memory capacity. Systems equipped with the GH200 are slated to debut in the second quarter of 2024.

Microsoft’s annual gathering of developers and IT professionals, ‘Ignite,’ sets the stage for the reveal. The event, scheduled for November 14 to 17 in Seattle, is also expected to showcase updates across Microsoft’s product lineup.