Nvidia Unveils ‘Chat with RTX’: The Next Game-Changer in AI Technology

Nvidia is once again making waves in the tech world with its latest release, ‘Chat with RTX.’ Just a day after introducing the RTX 2000 Ada GPU, the company is venturing into AI-centric applications with a tool designed to harness the power of its newer graphics cards, specifically the RTX 30 and 40 series. The early buzz is hard to ignore, especially among users who already own one of those cards.

If you’re on board the tech train, get ready for an AI experience in which your own computer handles complex AI tasks.

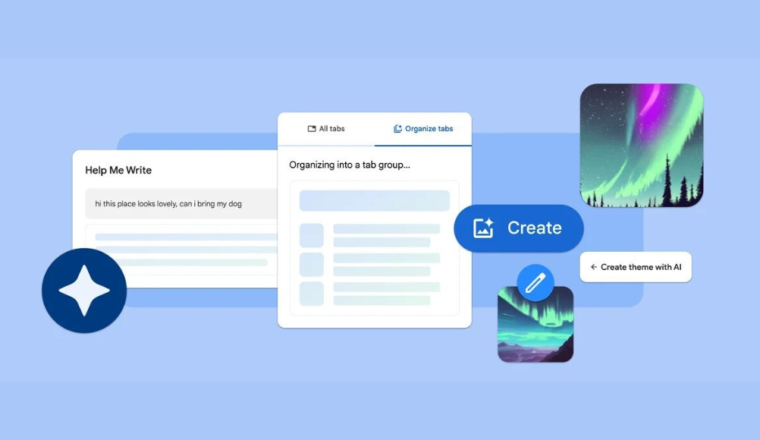

This groundbreaking application transforms your computer into a powerhouse, seamlessly managing the heavy lifting of AI-related functions. It is custom-built for tasks ranging from analyzing YouTube videos to deciphering dense documents.

The best part? You only need an Nvidia RTX 30- or 40-series GPU to embark on this AI adventure, making it an attractive proposition for those already equipped with Nvidia’s latest graphics technology.

Time-Saving Capabilities with ‘Chat with RTX’

The allure of ‘Chat with RTX’ lies in its potential to save time, particularly for individuals dealing with vast amounts of information. Imagine swiftly extracting the essence of a video or pinpointing crucial details within a stack of documents.

It aims to be your go-to AI assistant for such scenarios, joining the ranks of other prominent chatbots like Google’s Gemini or OpenAI’s ChatGPT, but with the distinctive Nvidia touch.

That said, it is not flawless. When functioning optimally, ‘Chat with RTX’ adeptly guides you through critical sections of your content. Its true prowess shines when tackling documents: it navigates PDFs and other files with ease and extracts vital details almost instantaneously.

For anyone familiar with the overwhelming task of sifting through extensive reading material for work or school, ‘Chat with RTX’ could be a game-changer.
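The idea of pinpointing the relevant part of a long document can be pictured with a toy example. The sketch below is not how ‘Chat with RTX’ works internally; it simply ranks passages by how many words they share with a question. The function names and the sample passages are hypothetical and exist only for illustration.

```python
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def rank_passages(question: str, passages: list, top_k: int = 1) -> list:
    """Return the top_k passages that share the most words with the question."""
    question_words = set(tokenize(question))

    def score(passage: str) -> int:
        # Count how often each question word occurs in the passage.
        counts = Counter(tokenize(passage))
        return sum(counts[word] for word in question_words)

    # sorted() is stable, so tied passages keep their original order.
    return sorted(passages, key=score, reverse=True)[:top_k]


# Hypothetical passages standing in for pages of a long document.
passages = [
    "The quarterly budget was approved in March.",
    "Installation requires a supported graphics card and recent drivers.",
    "The office will be closed on public holidays.",
]

best = rank_passages("Which graphics card does installation require?", passages)
print(best[0])
```

A real assistant would rely on far more capable language understanding than word overlap, but the shape of the task is the same: a question goes in, and the most relevant slice of a large pile of text comes out.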

Yet, like any innovation, ‘Chat with RTX’ is a work in progress. Setting it up requires patience, and it can be resource-intensive. Some wrinkles still need smoothing out. For instance, it does not retain earlier questions, so each new question has to be asked from scratch rather than as a follow-up.

Nevertheless, given Nvidia’s pivotal role in the ongoing AI revolution, these quirks are likely to be addressed swiftly as ‘Chat with RTX’ evolves.
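To make the memory limitation concrete, here is a minimal sketch of the difference between an assistant that sees only the current question and one that also receives the earlier exchange. It does not reflect Nvidia’s implementation; `build_prompt` and the sample conversation are hypothetical.

```python
from typing import List, Optional, Tuple


def build_prompt(question: str,
                 history: Optional[List[Tuple[str, str]]] = None) -> str:
    """Assemble the text handed to a model.

    history is a list of (question, answer) pairs from earlier turns;
    when it is omitted, the prompt contains only the new question.
    """
    lines = []
    for past_question, past_answer in history or []:
        lines.append(f"User: {past_question}")
        lines.append(f"Assistant: {past_answer}")
    lines.append(f"User: {question}")
    return "\n".join(lines)


# Hypothetical exchange used only for illustration.
history = [("Which GPUs does the app need?", "An RTX 30- or 40-series card.")]
follow_up = "Is that the only requirement?"

# Stateless: the follow-up arrives alone, so "that" refers to nothing.
stateless_prompt = build_prompt(follow_up)

# With history: the earlier turn is included, so "that" can be resolved.
contextual_prompt = build_prompt(follow_up, history)

print(stateless_prompt)
print("---")
print(contextual_prompt)
```

In the stateless case the user has to restate everything in each question, which matches the behavior described above.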

Looking Ahead: The Future of AI Interaction

As we eagerly await the refinement of ‘Chat with RTX,’ the application provides a glimpse into the future of AI interactions. Nvidia, renowned for its trailblazing efforts in the AI field, appears poised to push the boundaries further and shape the future of AI assistance.

While ‘Chat with RTX’ may have some rough edges at present, it represents a promising stride forward in AI integration. Keep an eye out as Nvidia continues to lead the charge in driving innovation. Stay tuned for updates on ‘Chat with RTX’ and the exciting possibilities it holds.