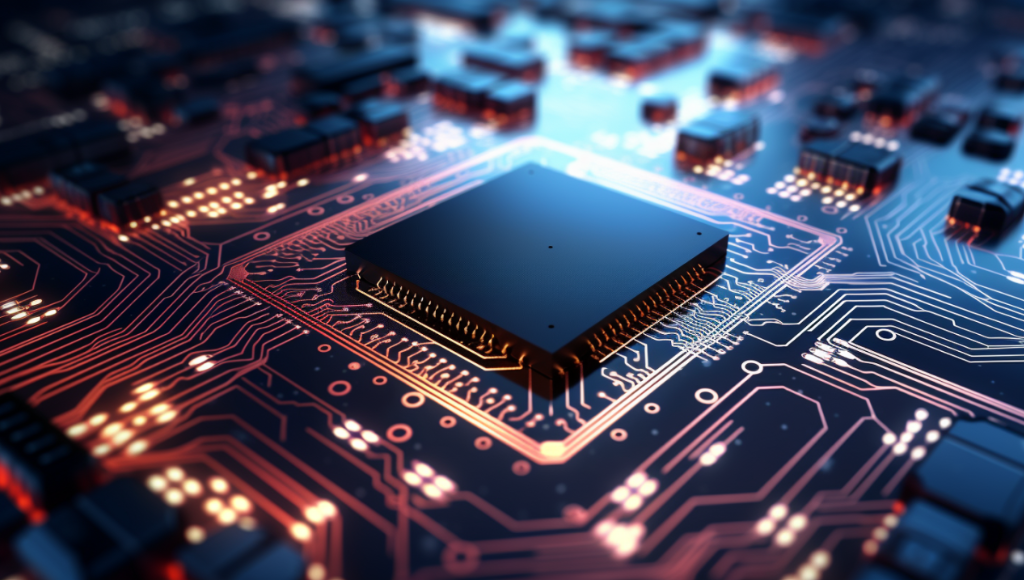

Securing graphics processing units (GPUs) has become a top concern for companies building on artificial intelligence (AI). Supply of these essential chips is tightly constrained and largely controlled by a handful of semiconductor giants. OpenAI, the creator of ChatGPT, faces the same constraint. Recent reports suggest that the company is actively exploring ways to address it, which could reshape its AI hardware strategy.

OpenAI is considering developing its own AI chip or even acquiring a chip manufacturing company, according to Reuters. Like many of its peers, OpenAI currently relies heavily on AI chips from NVIDIA, particularly the highly sought-after A100 and H100. It has amassed enough of them to count as “GPU-rich,” a term Dylan Patel used in a recent blog post for companies with access to extensive computing power, a crucial resource in the AI landscape.

ChatGPT, OpenAI’s flagship product, reportedly relies on some 10,000 high-end NVIDIA GPUs. Running hardware at that scale is expensive, and both NVIDIA and AMD have recently raised the prices of their chips and graphics cards. These rising costs have prompted OpenAI to look for more sustainable alternatives.

The Cost of AI: OpenAI’s Financial Perspective

According to Bernstein analyst Stacy Rasgon, as reported by Reuters, each query processed by ChatGPT costs OpenAI approximately 4 cents. If ChatGPT’s query volume grew to a tenth of the scale of Google search, OpenAI would need roughly $48.1 billion worth of GPUs up front and about $16 billion worth of chips each year to keep the service running. Against those figures, in-house chip production looks like a financially pragmatic option.
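To make the per-query arithmetic concrete, here is a minimal sketch in Python. Only the 4-cent per-query figure comes from the estimate above. The daily query volume is a hypothetical input chosen purely for illustration, and the function name is invented for this example. The sketch does not attempt to reproduce the $48.1 billion or $16 billion figures, which depend on hardware assumptions not detailed here.

```python
# Per-query cost estimate attributed to Bernstein analyst Stacy Rasgon (USD).
COST_PER_QUERY_USD = 0.04


def annual_query_cost(queries_per_day: float,
                      cost_per_query: float = COST_PER_QUERY_USD) -> float:
    """Return the yearly serving cost in USD for a given daily query volume."""
    return queries_per_day * cost_per_query * 365


if __name__ == "__main__":
    # Hypothetical volume, NOT a reported figure: 100 million queries per day.
    hypothetical_queries_per_day = 100_000_000
    cost = annual_query_cost(hypothetical_queries_per_day)
    print(f"${cost / 1e9:.2f} billion per year")
```

At the hypothetical volume of 100 million queries a day, this works out to about $1.46 billion a year, which shows how quickly a few cents per query adds up at scale.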

OpenAI’s CEO, Sam Altman, has been vocal about the GPU shortage and its impact on the company’s operations. According to a since-archived blog post by Raza Habib, CEO of London-based AI firm Humanloop, Altman acknowledged slow API speeds and reliability issues and attributed many of them to GPU shortages. Habib also relayed Altman’s vision of a cheaper and faster GPT-4, in line with OpenAI’s commitment to lowering the cost of its AI services over time.

Exploring the Possibility of OpenAI Hardware Products

According to sources cited by Reuters, OpenAI is contemplating the acquisition of a chip manufacturing company, mirroring Amazon’s acquisition of Annapurna Labs in 2015. A report by The Information hints at a more ambitious move, suggesting that OpenAI may venture into hardware products. The report mentions discussions between Sam Altman and Jony Ive, the former Chief Design Officer at Apple, about developing an iPhone-like AI device.

SoftBank CEO and investor Masayoshi Son has reportedly expressed interest in investing $1 billion in OpenAI to support the development of this AI product, dubbed the ‘iPhone of AI.’ Such a collaboration holds the promise of making advanced AI accessible to a broader audience.

In conclusion, OpenAI is at the forefront of addressing the GPU scarcity that has constrained the AI industry. Its pursuit of in-house chip production and its potential move into hardware products mark a bold step toward improving the accessibility, affordability, and performance of AI.