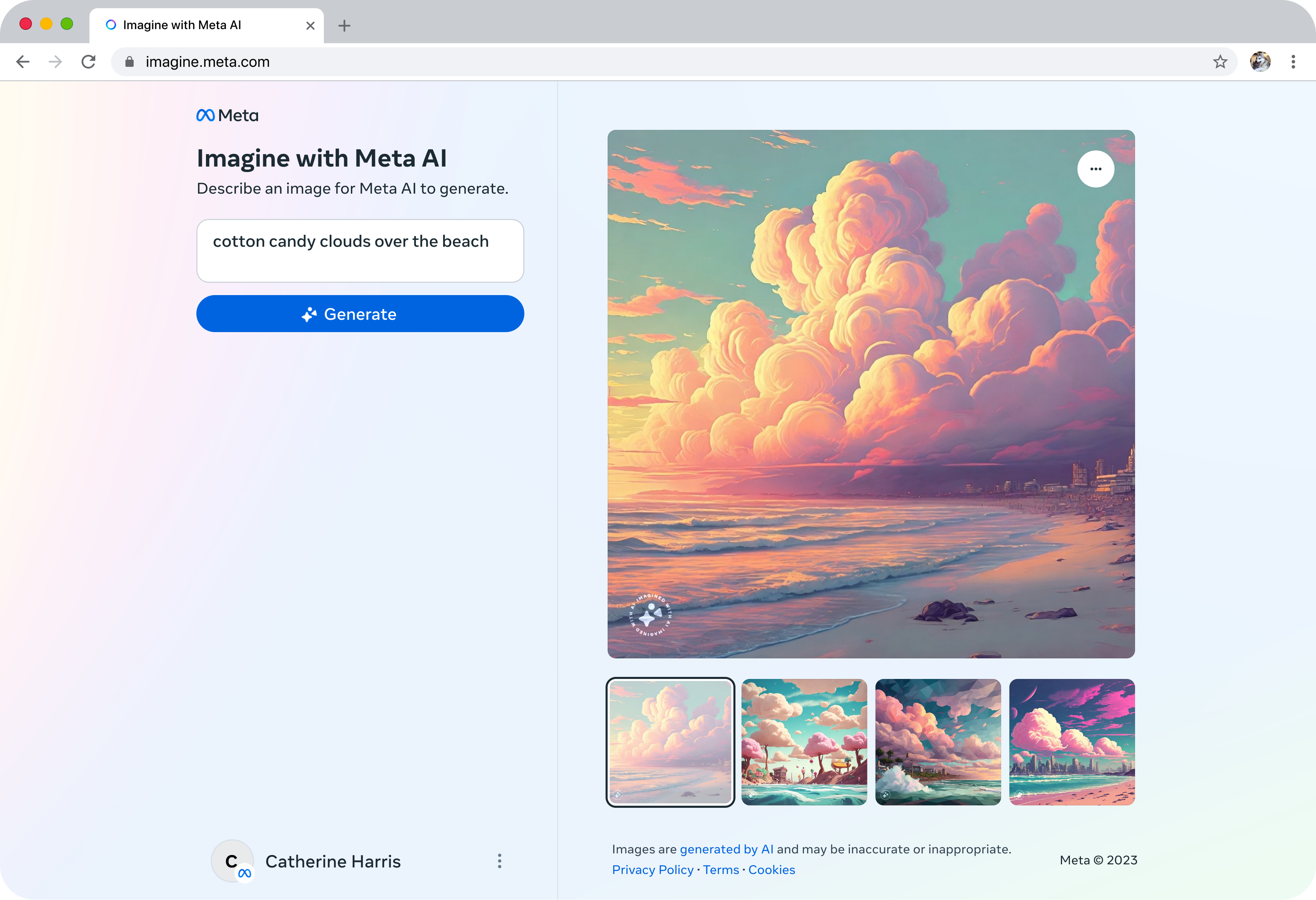

Hot on the heels of Google’s Gemini launch, Meta is introducing a standalone generative AI experience on the web called Imagine with Meta. The tool lets users create images by describing them in natural language, much like OpenAI’s DALL-E, Midjourney, and Stable Diffusion. Imagine with Meta is powered by Meta’s Emu image generation model and produces high-resolution images from text prompts. It is currently free to use in the U.S. and generates four images per prompt.

Meta says users have responded positively to Imagine in chats, where they have used it to create creative content, and the company is now expanding access beyond chats so that anyone can create images for free on the web. However, Meta’s track record with image generation tools, such as its racially biased AI sticker generator, raises concerns about the risks that come with Imagine with Meta.

This writer was not given a chance to test the tool before launch, but Meta has committed to introducing safeguards. In the coming weeks, the company plans to add invisible watermarks to content generated by Imagine with Meta to improve transparency and traceability. The watermarks are applied by an AI model and can be detected by a corresponding model, though it remains unclear whether that detection model will be made public.

Meta says the watermarks are resilient to common image manipulations, including cropping, resizing, color changes, screenshots, image compression, noise, and sticker overlays. The company aims to bring invisible watermarking to other products that generate AI images in the future.

Watermarking generative art is not a new idea. Several companies, such as Imatag and Steg.AI, offer watermarking tools designed to survive image edits. Microsoft and Google have also adopted AI-based watermarking standards, while platforms like Shutterstock and Midjourney have agreed to follow guidelines for labeling content created with generative AI tools.

Tech firms are under growing pressure to make clear when a work was generated by AI, particularly amid concerns about deepfakes related to the war in Gaza and AI-generated child abuse images. China’s Cyberspace Administration has issued regulations requiring generative AI vendors to mark generated content in a way that does not interfere with how users use it, and U.S. Senator Kyrsten Sinema has stressed the importance of transparency in generative AI, including the use of watermarks, during Senate committee hearings.